IBL News | New York

Generative AI startup Cohere, which is developing a model ecosystem for the enterprise, has raised $270 million in its Series C round. A mix of venture capital and strategic investors, including Nvidia, Oracle, and Salesforce Ventures, participated in the round.

The Toronto-based company has raised a total of $445 million to date. Only OpenAI ($11.3 billion) and Anthropic ($450 million) have raised more, putting Cohere ahead of rivals Inflection AI ($225 million) and Adept ($415 million). The new funding values the company at around $2.1 billion, according to Bloomberg.

Founded in 2019 and with a workforce of 180 employees, Cohere builds, trains, and customizes large language models for enterprise customers. Corporations can use their proprietary data models — which can be expensive to train — to do things like summarize customer emails or help write website copy.

Nvidia CEO Jensen Huang said of Cohere, “Their service will help enterprises around the world harness these capabilities to automate and accelerate.”

Cohere’s platform is cloud agnostic, allowing companies to use their preferred cloud provider to increase data privacy and make implementation simpler.

The platform can be deployed inside public clouds (e.g., Google Cloud, Amazon Web Services), a customer’s existing cloud, virtual private clouds, or on-site.

The startup works with companies such as Jasper and HyperWrite on copywriting tasks like creating marketing content, drafting emails, and developing product descriptions. It also collaborates with LivePerson, the conversational marketing company, to build fine-tuned LLMs to improve explainability, as well as with several news outlets.

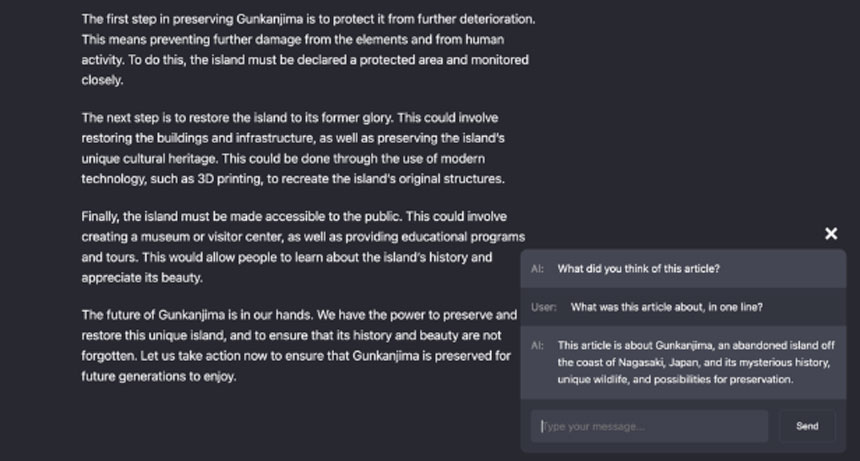

Cohere said it sees “search and retrieval” as its next core area of growth, giving models and chatbots the ability to expand their knowledge base and search the web for information relevant to a query.

The President and COO, Martin Kon, told TechCrunch: “Today, chatbots don’t have access to the world. They don’t know about what happened ten minutes ago. They have to memorize everything within themselves, and they only have a memory of what they saw during training. With search and retrieval, you can require a model to cite sources, so users don’t need to blindly trust a model; everything links out to a site that you can verify and fact-check.”
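The idea Kon describes can be illustrated with a toy sketch: retrieve passages relevant to a query, then hand them to a model as numbered sources it must cite. This is not Cohere's implementation. The `Document` class, the word-overlap scoring, the prompt wording, and the example URLs are all assumptions made for illustration, and a real system would use a proper search index or embeddings.

```python
from dataclasses import dataclass


@dataclass
class Document:
    url: str   # hypothetical source address the answer can link out to
    text: str


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[Document]:
    """Return up to k documents sharing at least one word with the query (toy scoring)."""
    query_words = set(query.lower().split())

    def overlap(doc: Document) -> int:
        return len(query_words & set(doc.text.lower().split()))

    ranked = sorted(corpus, key=overlap, reverse=True)
    return [doc for doc in ranked[:k] if overlap(doc) > 0]


def build_prompt(query: str, sources: list[Document]) -> str:
    """Number each source so a model can cite it as [1], [2], and so on."""
    numbered = "\n".join(
        f"[{i}] {doc.url}\n{doc.text}" for i, doc in enumerate(sources, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"{numbered}\n\nQuestion: {query}"
    )


# Made-up corpus for illustration only.
corpus = [
    Document("https://example.com/funding", "Cohere raised a series C round"),
    Document("https://example.com/weather", "Rain is expected in Toronto"),
]
question = "How much has Cohere raised"
sources = retrieve(question, corpus)
prompt = build_prompt(question, sources)
print(prompt)
```

Because every retrieved passage carries its URL into the prompt, an answer that cites "[1]" can be traced back to a page the user can check, which is the verification step the quote points to.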

Cohere plans to build additional models that can take action and work for customers, like booking a flight, scheduling a meeting, or filing an expense report on a person’s behalf. In that way, it’s chasing after competitors like Adept, Inflection, and OpenAI, all of which are building systems to connect AI with third-party apps, services, and products.
