IBL News | New York

Anthropic accidentally published part of the source code for Claude Code, its agentic AI tool, yesterday.

A 59.8 MB JavaScript source map file (.map), intended for internal debugging, was inadvertently included in version 2.1.88 of the @anthropic-ai/claude-code package, which was pushed live to the public npm registry yesterday.
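For context on why a stray .map file matters: a version 3 source map is a JSON document that lists original file names in `sources` and may carry the original source text in an optional `sourcesContent` array. The sketch below uses a small made-up map, not the leaked file, to show how those two arrays pair up. The function name `embedded_sources` and the toy file names are illustrative assumptions.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Return {original file name: original source text} for a v3 source map."""
    data = json.loads(source_map_text)
    names = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for i, name in enumerate(names):
        # sourcesContent is optional, and individual entries may be null.
        if i < len(contents) and contents[i] is not None:
            recovered[name] = contents[i]
    return recovered


# Toy map for illustration only; file names and contents are invented.
TOY_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const main = () => 1;\n", None],
    "names": [],
    "mappings": "",
})

if __name__ == "__main__":
    for name, text in embedded_sources(TOY_MAP).items():
        print(name, len(text), "chars")
```

When `sourcesContent` is populated, anyone who downloads the map can read the original files directly, which is why such files are normally kept out of published packages.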

“No sensitive customer data or credentials were involved or exposed,” an Anthropic spokesperson said. “This was a release packaging issue caused by human error, not a security breach. We’re rolling out measures to prevent this from happening again.”

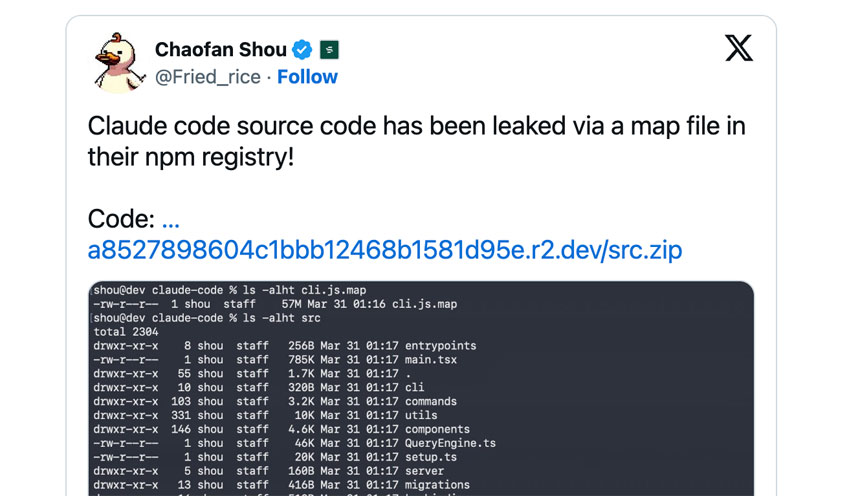

By 4:23 am ET, Chaofan Shou (@Fried_rice), an intern at Solayer Labs, had broadcast the discovery on X, including a direct download link to a hosted archive. The post drew more than 21 million views, and thousands of developers analyzed the code across GitHub.

For Anthropic, a company with reported annualized revenue of $19 billion as of March 2026, the leak was a strategic hemorrhage of intellectual property.

Claude Code, which has seen massive adoption over the last year, helps software developers build features, fix bugs, and automate tasks.

The leak shows how Claude Code addresses “context entropy,” the tendency for AI agents to become confused or hallucinate as long-running sessions grow more complex.

The leaked source revealed a sophisticated, three-layer memory architecture that moves away from traditional “store-everything” retrieval.

As analyzed by developers such as @himanshustwts, the architecture employs a “Self-Healing Memory” system.

At its core is MEMORY.md, a lightweight index of pointers (~150 characters per line) that is perpetually loaded into the context. This index does not store data; it stores locations.
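A minimal sketch of what a pointer index of this kind could look like, assuming a hypothetical `- topic -> path` line format capped at 150 characters. The line format, the `Pointer` type, and the function names are illustrative assumptions and are not taken from the leaked code.

```python
from dataclasses import dataclass

MAX_LINE_CHARS = 150  # the article cites roughly 150 characters per line


@dataclass(frozen=True)
class Pointer:
    topic: str
    location: str  # where the detail lives, not the detail itself


def format_pointer(p: Pointer) -> str:
    """Render one index line and reject lines over the length cap."""
    line = f"- {p.topic} -> {p.location}"
    if len(line) > MAX_LINE_CHARS:
        raise ValueError(f"pointer line too long: {len(line)} chars")
    return line


def parse_index(text: str) -> list[Pointer]:
    """Read pointer lines back out of the index text."""
    pointers = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line.startswith("- ") or " -> " not in line:
            continue  # skip blank lines and anything that is not a pointer
        topic, location = line[2:].split(" -> ", 1)
        pointers.append(Pointer(topic.strip(), location.strip()))
    return pointers


# Hypothetical entries; the paths are invented for the example.
index_text = "\n".join(format_pointer(p) for p in [
    Pointer("build commands", "notes/build.md"),
    Pointer("auth flow", "notes/auth.md"),
])
```

Because each line holds only a topic and a location, the index stays small enough to keep in context at all times, while the detail is fetched from the referenced file only when needed.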

For competitors, the “blueprint” is clear: build a skeptical memory. The code confirms that Anthropic’s agents are instructed to treat their own memory as a “hint,” requiring the model to verify facts against the actual codebase before proceeding.
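The "memory as a hint" idea can be illustrated with a short sketch: before acting on a remembered fact, check that it still holds in the files on disk. The function `verify_hint`, its parameters, and the demo file are hypothetical and only show the general pattern, not Anthropic's implementation.

```python
import tempfile
from pathlib import Path


def verify_hint(repo_root: Path, relative_path: str, expected_snippet: str) -> bool:
    """Return True only if the remembered snippet still appears in the file."""
    target = repo_root / relative_path
    if not target.is_file():
        return False  # the file moved or was deleted, so the memory is stale
    return expected_snippet in target.read_text(encoding="utf-8")


def demo() -> tuple[bool, bool]:
    """Check one memory that still holds and one that does not."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "app.py").write_text(
            "def login(user):\n    return True\n", encoding="utf-8"
        )
        fresh = verify_hint(root, "app.py", "def login(user)")
        stale = verify_hint(root, "app.py", "def sign_in(user)")
        return fresh, stale


if __name__ == "__main__":
    print(demo())
```

In this pattern a failed check means the agent discards or refreshes the memory entry rather than acting on it.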

By exposing the “blueprints” of Claude Code, Anthropic has handed researchers and bad actors a roadmap to bypass security guardrails and permission prompts.

Because the leak revealed the exact orchestration logic for Hooks and MCP servers, attackers can now design malicious repositories specifically tailored to “trick” Claude Code into running background commands or exfiltrating data before the user ever sees a trust prompt.

Claude code source code has been leaked via a map file in their npm registry!

Code: https://t.co/jBiMoOzt8G pic.twitter.com/rYo5hbvEj8

— Chaofan Shou (@Fried_rice) March 31, 2026