IBL News | New York

IBM last week released four new Granite 4.0 Nano models under the Apache 2.0 license. The models are designed to be highly accessible and are well suited to developers building applications that run on consumer hardware without relying on cloud computing.

With these models, IBM is entering a crowded and rapidly evolving market of small language models (SLMs), competing with offerings like Qwen3, Google’s Gemma, LiquidAI’s LFM2, and Mistral’s dense models in the sub-2B parameter space.

IBM is positioning Granite as a platform for building the next generation of lightweight, trustworthy AI systems.

The 350M variants can run comfortably on a modern laptop CPU with 8–16GB of RAM, while the 1.5B models typically require a GPU with at least 6–8GB of VRAM for smooth performance.

At these sizes, the Nano models are a fraction of the size of server-bound counterparts from companies like OpenAI, Anthropic, and Google.
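To put the hardware figures above in perspective, the memory needed just to hold a model's weights can be roughly estimated as parameter count times bytes per parameter. The sketch below is a back-of-the-envelope calculation, not IBM's published sizing: the function name and the precision table are illustrative assumptions, and the estimate ignores activations, the KV cache, and runtime overhead, which is why real-world requirements are higher.

```python
# Rough weight-only memory estimate: parameters x bytes per parameter.
# Assumption: ignores activations, KV cache, and framework overhead.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str = "bf16") -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Parameter counts taken from the article (approximate).
MODELS = {"350M variant": 350e6, "1.5B variant": 1.5e9}

for name, params in MODELS.items():
    for dtype in ("fp32", "bf16", "int4"):
        print(f"{name} @ {dtype}: ~{weight_memory_gb(params, dtype):.2f} GB")
```

Under these assumptions, a 350M-parameter model needs about 0.7 GB for its weights in 16-bit precision and a 1.5B-parameter model about 3 GB, which leaves headroom within the RAM and VRAM ranges cited above.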

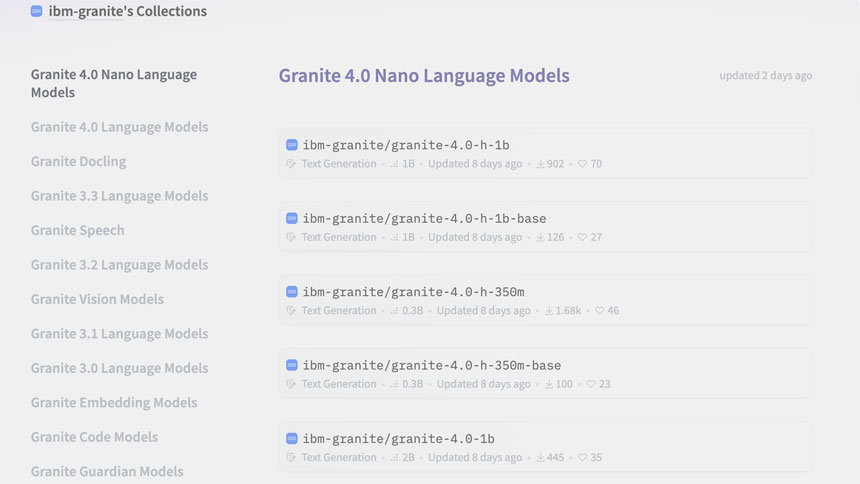

The Granite 4.0 Nano family comprises four open-source models, all now available on Hugging Face:

- Granite-4.0-H-1B (~1.5B parameters) – Hybrid-SSM architecture

- Granite-4.0-H-350M (~350M parameters) – Hybrid-SSM architecture

- Granite-4.0-1B (closer to 2B parameters) – Transformer-based variant

- Granite-4.0-350M – Transformer-based variant
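For readers who want to try one of these checkpoints locally, the following is a minimal sketch using the Hugging Face `transformers` library. The repository ID is an assumption based on the model names listed above and should be confirmed on Hugging Face; `generate_reply` is a hypothetical helper written for this illustration, not part of any IBM tooling. The hybrid (H) variants may require a recent `transformers` release.

```python
# Assumed repository ID; confirm the exact name on Hugging Face before running.
MODEL_ID = "ibm-granite/granite-4.0-350m"


def generate_reply(prompt: str, model_id: str = MODEL_ID, max_new_tokens: int = 64) -> str:
    """Load a small causal language model on CPU and answer a single user prompt."""
    # Imported lazily so this module can be imported without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # Format the prompt with the model's own chat template, then tokenize it.
    messages = [{"role": "user", "content": prompt}]
    text = tokenizer.apply_chat_template(
        messages, tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer(text, return_tensors="pt")

    # Generate, then decode only the newly produced tokens.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_reply("Explain what a small language model is.")` downloads the weights on first use and then runs entirely on the local machine, in line with the no-cloud use case the release targets.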

Overall, Granite-4.0-1B achieved a leading average benchmark score of 68.3% across the general knowledge, math, code, and safety domains.

For developers and researchers seeking performance without overhead, the Nano release shows that building something powerful does not require 70 billion parameters.