IBL News | New York

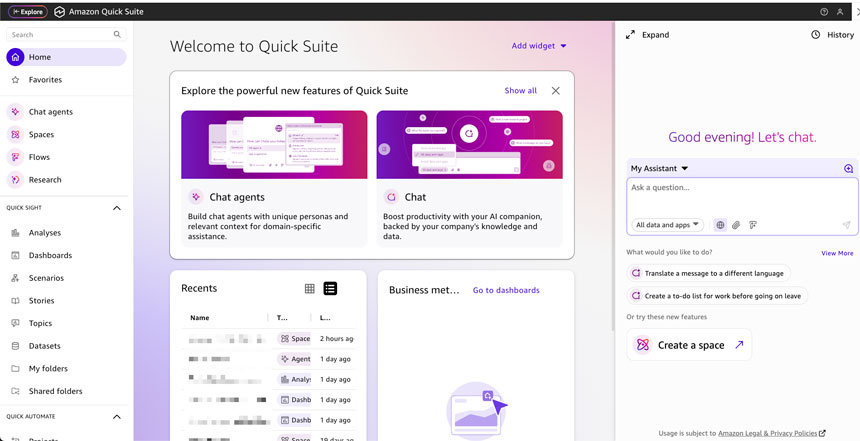

OpenAI announced yesterday that it has 1 million business customers worldwide using ChatGPT for Work, either directly or through its developer platform.

Organizations in financial services, healthcare, and retail are among the most active. Consumer adoption is also high, with 800 million weekly users.

The San Francisco-based lab is on track to generate $13 billion in revenue this year as it continues to expand its sales.

To support this enterprise growth, OpenAI has launched a new wave of tools and integrations, including:

- Company knowledge consolidates all the context from connected apps (Slack, SharePoint, Google Drive, GitHub, Canva, Figma, Zillow, Spotify, among others) into ChatGPT.

- Codex, a model for code generation, refactoring, and workflow automation.

- AgentKit lets users build enterprise agents.

- Multimodal models and APIs, such as the Image Generation API, Sora 2, and gpt-realtime, along with the Realtime API for building production voice agents.

- Databricks has made OpenAI models available natively on its stack.

- The Agentic Commerce Protocol (ACP) enables conversational commerce experiences in ChatGPT. Shopify, Etsy, Walmart, PayPal, and Salesforce are among the companies using this protocol.

OpenAI cited a recent Wharton study showing that 75% of enterprises report a positive ROI, while fewer than 5% report a negative return. “When AI is deployed with the right use case and infrastructure, teams see real results,” the company said.

Also this week, OpenAI agreed to pay Amazon.com $38 billion for computing power in a multiyear deal, marking the first partnership between the startup and the cloud provider.
