IBL News | Nashville, Tennessee

“In higher education, we face today skepticism and scrutiny, and current developments are testing us,” said Dr. John O’Brien, President and CEO at Educause, during the opening talk of the annual conference, which took place this week in Nashville, Tennessee. “The work we do, together, has never been more important.”

O’Brien also introduced the winners of the 2025 Award Program:

Leadership Award:

• Elias G. Eldayrie, Senior VP and CIO at University of Florida

• Helen Norris, Former CIO and Vice President for Information Technology at Chapman University

Organizational Culture Award:

• Liv Gjestvang, Vice President and CIO at Denison University

Rising Star Award:

• Michael McGarry, Academic Technology Lead and LMS Administrator at California State University, Channel Islands

Community Leadership Award:

• David Sherry, Former CIO at Princeton University

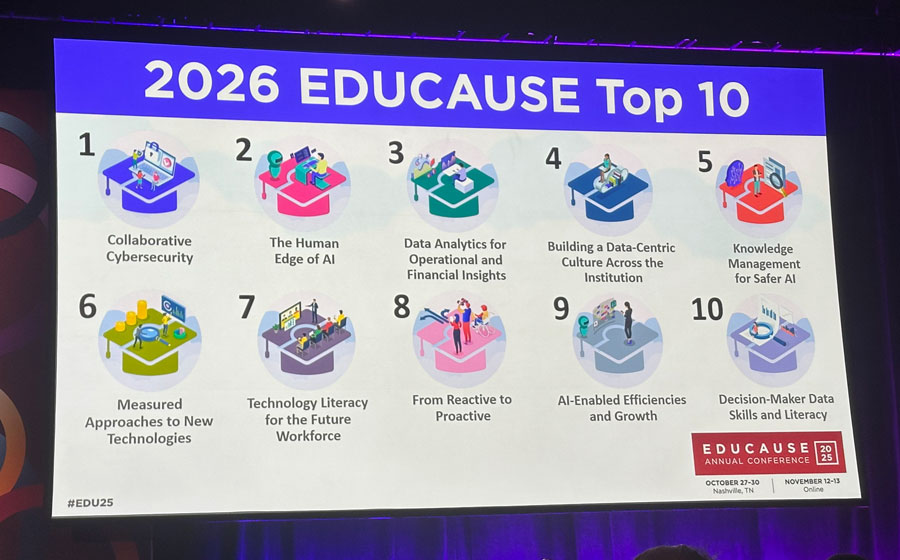

The next day, while presenting the 2026 Top 10 report, O’Brien advocated for clarity, resilience, and connection among institutions as they navigate uncertainty.

Also on Wednesday, in conversation with reporters (including IBL News), Dr. John O’Brien (pictured) said, “We are at an inflection point and educators want to come together.”