IBL News | New York

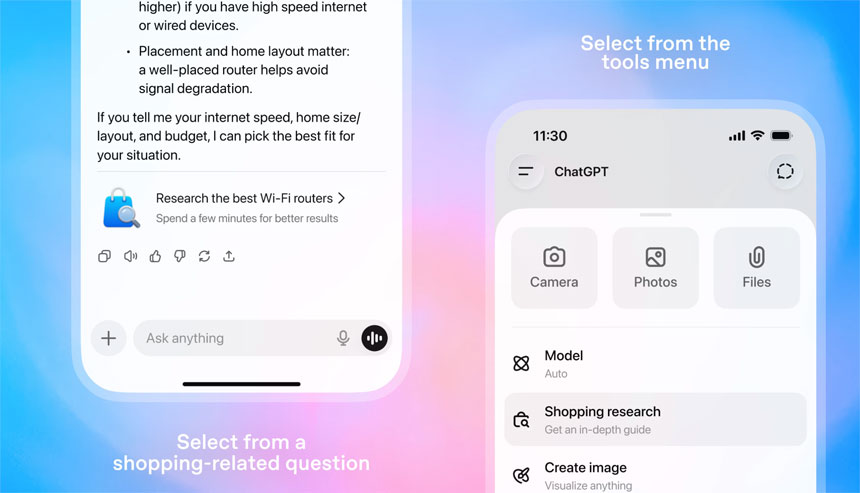

OpenAI introduced shopping research to all users this month. Released for the holiday season, the feature is powered by a version of GPT-5-Thinking mini that was post-trained with reinforcement learning for shopping tasks.

Essentially, ChatGPT conducts deep research across the Internet on the user's behalf, drawing on reviews, comments, and high-quality sources to help find the right products without sifting through multiple sites. The model may ask clarifying questions along the way.

Users simply describe what they are looking for, e.g., “Find an affordable electric bike that can be folded and stored in a small apartment.”

The chatbot considers users’ past conversations and their memory graph to deliver a personalized buyer’s guide in minutes.

The company said that this tool “performs especially well in detail-heavy categories like electronics, beauty, home and garden, kitchen and appliances, and sports and outdoor.”

For simple shopping questions, such as checking a price or confirming a feature, a regular ChatGPT response is quick and usually sufficient.

When the user wants in-depth shopping research, such as comparisons, constraints, and trade-offs, the model takes a few minutes to provide a more detailed, well-researched answer.

The company trained the model to read trusted sites, cite reliable sources, and synthesize information to produce high-quality product research. It is also designed to be an interactive experience that can update and refine its research in real time, adjusting to user product preferences.

To purchase an item, users can click through to the retailer’s site. In the future, OpenAI plans to let users purchase directly through ChatGPT from merchants that are part of Instant Checkout.