IBL News | New York

Anthropic, the company behind the AI chatbot Claude, announced the creation of a Higher Education Advisory Board made up of academic leaders, along with three new AI Fluency courses.

The Higher Education Advisory Board will be chaired by Rick Levin, who previously led Yale University and Coursera. He said, “Our role is to advise the company as it develops ethically sound policies and products that will enable learners, teachers, and administrators to benefit from AI’s transformative potential while upholding the highest standards of academic integrity and protecting student privacy.”

The other board members are also drawn from higher education:

- David Leebron, Former President of Rice University.

- James DeVaney, Special Advisor to the President, Associate Vice Provost for Academic Innovation, and Founding Executive Director of the Center for Academic Innovation at the University of Michigan.

- Julie Schell, Assistant Vice Provost of Academic Technology at the University of Texas, Austin.

- Matthew Rascoff, Vice Provost for Digital Education at Stanford University.

- Yolanda Watson Spiva, President of Complete College America.

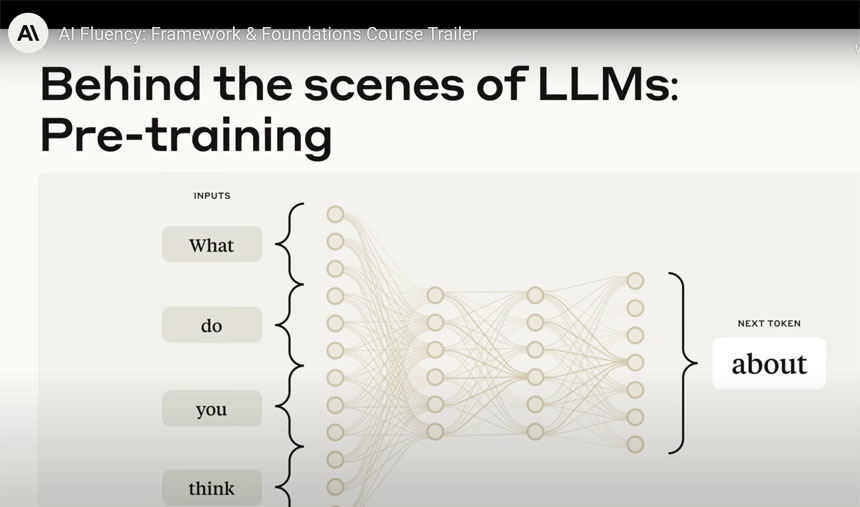

Anthropic has also developed three new courses that build on its existing AI Fluency course. They are designed to meet the need for practical frameworks that help institutions integrate AI thoughtfully.

Each course, co-developed with Professor Rick Dakan of Ringling College of Art and Design and Professor Joseph Feller of University College Cork, is available under a Creative Commons license, so any institution can adapt it.

• AI Fluency for Educators helps faculty integrate AI into their teaching practice, from creating materials and assessments to enhancing classroom discussions. Built on experience from early adopters, it shows what works in real classrooms.

• AI Fluency for Students teaches responsible AI collaboration for coursework and career planning. Students learn to work with AI while developing their own critical thinking skills, and they write a personal commitment to responsible AI use.

• Teaching AI Fluency supports educators who want to bring AI literacy to their campuses and classrooms. It includes frameworks for instruction and assessment, plus curriculum considerations for preparing students for an increasingly AI-enhanced world.

Anthropic is not alone in targeting higher education. OpenAI launched ChatGPT Edu, a version of its chatbot customized for universities. It includes administrative controls, enterprise-grade authentication, and features like “Study Mode,” which walks students through problems step by step.

Microsoft has embedded Copilot for Education into Office 365, highlighting its “commercial data protection” framework.

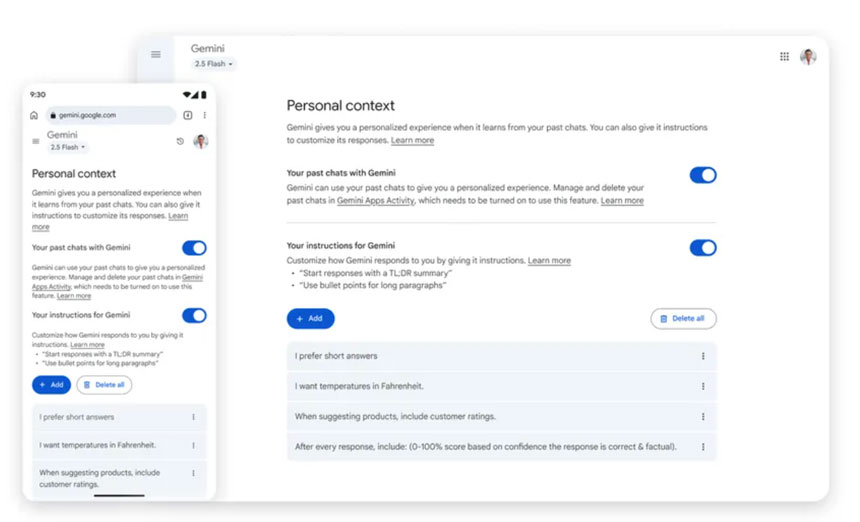

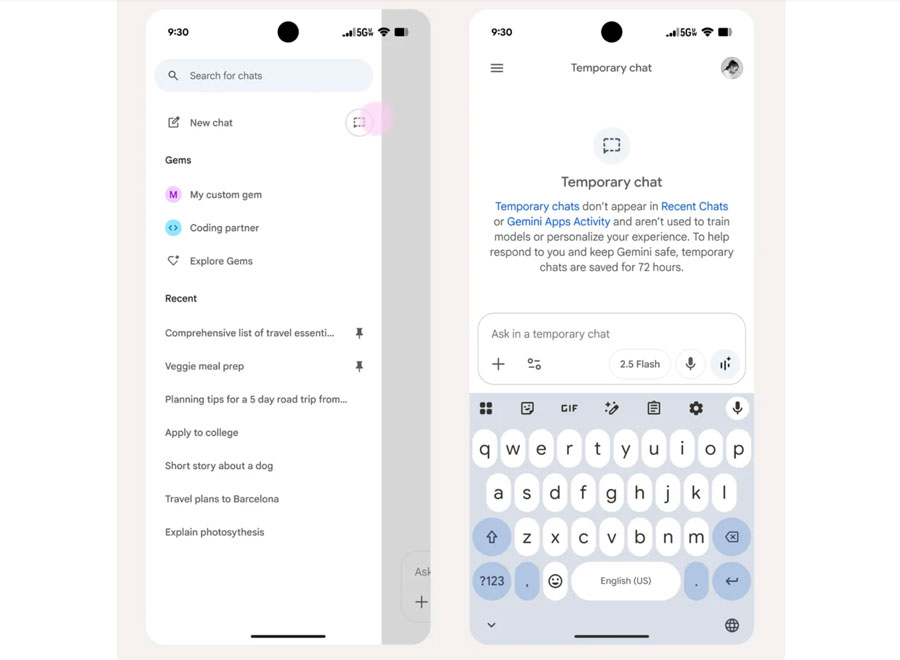

Google has doubled down on its education footprint with Gemini in Classroom and Gemini for Education, designed to help teachers generate differentiated materials and to give students tutoring experiences.