IBL News | New York

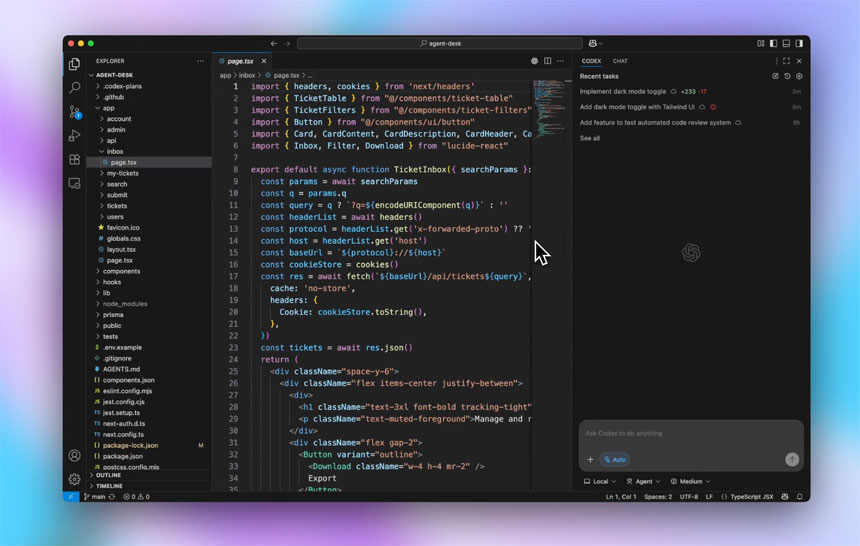

OpenAI yesterday released GPT-5-Codex, a version of GPT-5 optimized for its AI coding agent, which the company describes as “performing better at real-time collaboration and tackling tasks independently anywhere you develop, whether via the terminal, IDE, web, or even your phone.”

The update is part of OpenAI’s effort to compete more effectively with other AI coding tools, such as Claude Code, Windsurf, GitHub Copilot, and Cursor (which has $500 million in annual recurring revenue, or ARR).

The San Francisco-based research lab first launched Codex CLI in April and Codex web in May.

Codex now works in the terminal or IDE, on the web, in GitHub, and in the ChatGPT iOS app. It is included with ChatGPT Plus, Pro, Business, Edu, and Enterprise plans.

“It performs better on agentic coding benchmarks,” and “its code review capability can catch critical bugs before they ship,” the company explained.

GPT-5-Codex can spend anywhere from a few seconds to seven hours on a coding task.

The new model is now rolling out to all ChatGPT Plus, Pro, Business, Edu, and Enterprise users across Codex products, which can be accessed via a terminal, IDE, GitHub, or ChatGPT.

OpenAI says it plans to make the model available to API customers in the future.
